Reliable & Trustworthy LLMs: RagaAI Open-Sources the most comprehensive Platform for Evaluation & Guardrails

–News Direct–

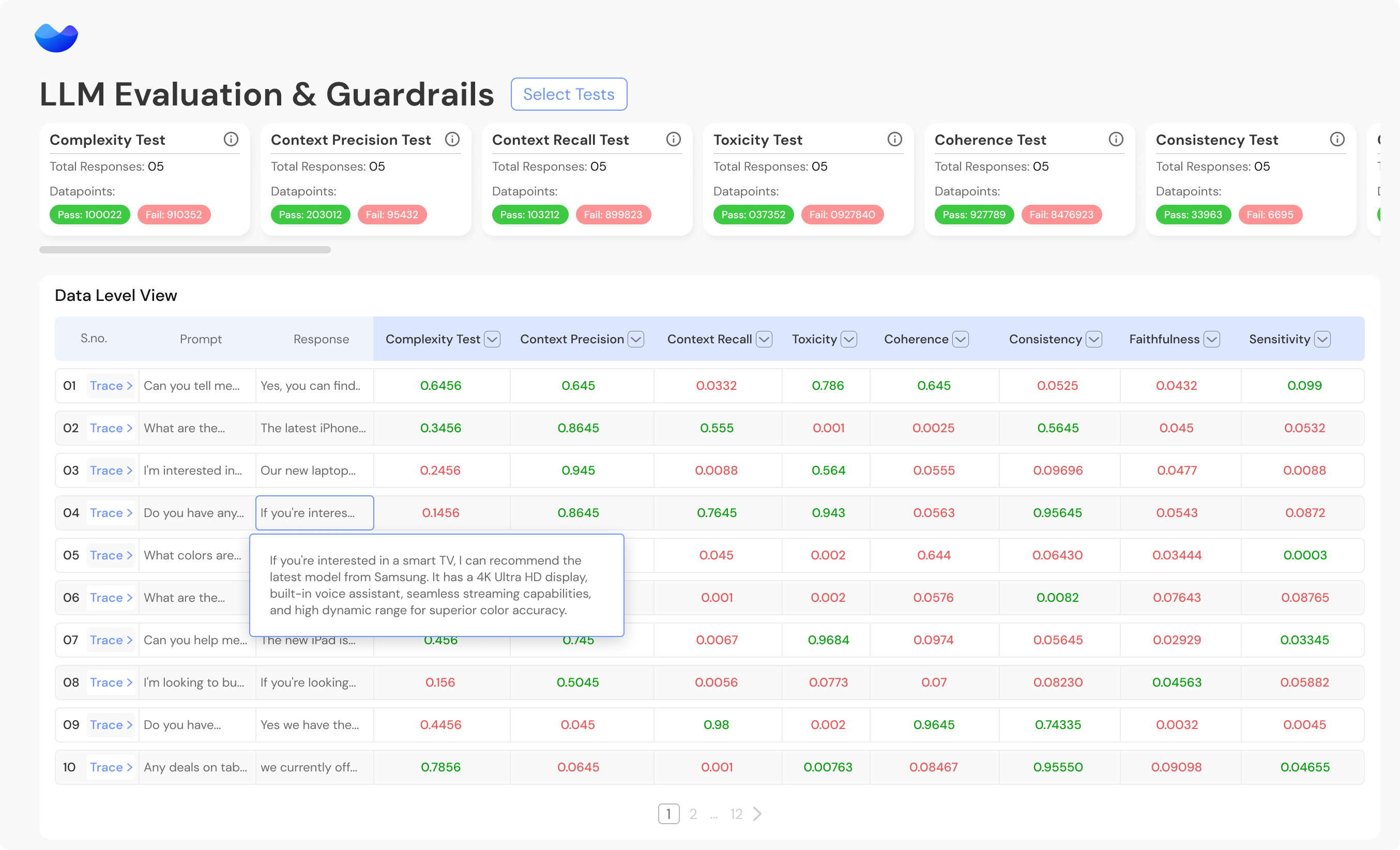

Today, RagaAI, the leading AI testing company, is significantly expanding its testing platform by launching RagaAI LLM Hub, an open-source, enterprise-ready evaluation and guardrails platform for LLMs. With over 100 meticulously designed metrics, it is the most comprehensive platform that allows developers and organizations to evaluate and compare LLMs effectively and to establish essential guardrails for LLM and Retrieval-Augmented Generation (RAG) applications. These tests assess aspects including Relevance & Understanding, Content Quality, Hallucination, Safety & Bias, Context Relevance, Guardrails, and Vulnerability Scanning, along with a suite of Metric-Based Tests for quantitative analysis.

The RagaAI LLM Hub is uniquely designed to help teams identify and fix issues throughout the LLM lifecycle, whether in a proof of concept or an application in production. From the quality of datasets to prompt templating and the choice of LLM architecture or vector database, RagaAI LLM Hub identifies issues across the entire RAG pipeline. This is pivotal for understanding the root cause of failures within an LLM application and addressing them at their source, revolutionizing the approach to ensuring reliability and trustworthiness.

"At RagaAI, our mission is to empower developers and enterprises with the tools they need to build robust and responsible LLMs," said Gaurav Agarwal, Founder and CEO of RagaAI. "With our comprehensive open-source evaluation suite, we believe in democratizing AI innovation. Together with the enterprise-ready version, we've created a comprehensive solution that enables organizations to navigate the complexities of LLM deployment with confidence. This is a game-changing solution that provides unparalleled insights into the reliability and trustworthiness of LLMs and RAG applications."

The RagaAI LLM Hub is already used across industries such as e-commerce, finance, marketing, legal, and healthcare, supporting developers and enterprises in LLM applications including chatbots, content creation, text summarization, and source code generation. For instance, one customer came to RagaAI after encountering hallucinations and incorrect outputs while developing a customer service chatbot. Using RagaAI LLM Hub's metrics, they pinpointed and quantified the hallucination issue in their RAG pipeline. The platform also identifies nuanced customer issues and recommends the specific areas of the pipeline to address.

The RagaAI LLM Hub helps teams set guardrails that support data privacy and legal compliance, including transparency regulations and anti-discrimination laws. It plays a vital role in promoting ethical and responsible AI practices, particularly in sensitive sectors such as finance, healthcare, and law. It also helps mitigate reputational risk by keeping outputs aligned with societal norms and values.

You can learn more by visiting RagaAI's enterprise-ready testing platform: raga.ai/llms

Today's launch follows RagaAI's successful funding round in January 2024, underscoring the company's momentum and commitment to advancing LLM quality assurance.

About RagaAI

RagaAI is the #1 AI testing platform dedicated to advancing AI quality assurance. Founded on the belief that AI systems should be robust, responsible, and ready for the future, RagaAI develops cutting-edge solutions to detect, diagnose, and resolve AI issues seamlessly. With a commitment to innovation and excellence, RagaAI is redefining the standards of LLM evaluation worldwide. To learn more, please visit: https://raga.ai/

Contact Details

RagaAI

Bilal Mahmood

+44 7714 007257

b.mahmood@stockwoodstrategy.com


View source version on newsdirect.com: https://newsdirect.com/news/reliable-and-trustworthy-llms-ragaai-open-sources-the-most-comprehensive-platform-for-evaluation-and-guardrails-315470990

RagaAI

COMTEX_448904906/2655/2024-03-07T13:04:54

Disclaimer: The views, suggestions, and opinions expressed here are the sole responsibility of the experts. No DigiShor journalist was involved in the writing and production of this article.
